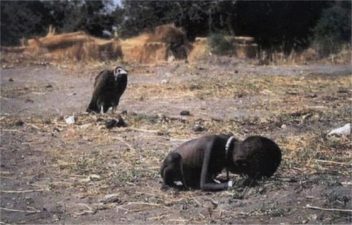

If a tree falls in the forest, does anyone hear? Does your artificial intelligence-driven software even ponder this philosophical paradox? With so much of our world out of whack these days, I mean, you know … you are reading the news. The ice caps are melting. The North Pole is warmer than Bucharest, and famines in Africa are so dire that it hurts to hear more about them. We can easily trace our planetary perils to humanity, a hungry species that has tipped the scales, and has created imbalance in resource allocation. And yet another agtech startup comes along, sometimes with hardware, sometimes with software — or both — claiming they have the answer to world hunger and that it’s called artificial intelligence.

How does that work? Artificial intelligence isn’t a plug-and-play solution that you can build in a bunker or basement at an MIT weekend hackathon or buy through the Google Play app store. It has to be architected into the company’s business model from day one, and it should be superbly flexible, sophisticated and nuanced in design, application, deployment and oversight.

Now, hundreds of new agriculture companies are springing up, building greenhouses and putting sensors into the ground or onto satellites. They are sending out drones and airplanes to collect scads of data from millions of IoT sensors. We know this pattern. It’s the same as what we have done in the past, only faster. But however eloquent the technical white papers of these new companies may be, artificial intelligence is only as good as the people and values creating the rules. It is only as good as the players and stakeholders.

I am going to give you an example. AI is supposed to be a rule-based system. Define, let’s say, what success looks like on a farm: ample fertilizer for the tomatoes, quickly remediated pest infestations, a harvest that’s bountiful and red. And indeed those sound like reasonable goals. But today, when we dream up what we want from our food system, we are setting those goals with human rules from the West, with our limited understanding of how people and nature behave.

Developing nations, where most food is farmed, are a black box. Nature, which presents infinite complexities, is even more vexing. It represents the equivalent of billions of black boxes nested like Russian dolls. We are just beginning to learn about the vast communication networks in forests, where trees communicate not only with their own species but also outside their species “caste,” telling unrelated species where nutrients, water and sunshine lie. There is a notion that if the forests survive, we all survive. Read the book The Hidden Life of Trees for a primer.

In many ways the way of Nature is to think like a mountain, as famed American environmentalist Aldo Leopold put it. Decades ago, he witnessed the destruction of wild wolves on the mountains of the American Southwest and how their extinction there led to the overpopulation of deer, which then destroyed all the trees on the mountain. The mountain was not happy and took decades to recover. Leopold coined the term “mountain thinking” to mean having a complete appreciation for the profound interconnectedness of the elements in our ecosystems. Think like a mountain, rather than an isolated individual.

Today, I see how simplistic the rule-based system is for artificial intelligence, and I see who is building these systems: young guys drinking Soylent in Silicon Valley. They are often isolated individuals or, worse, profit-only driven enterprises. And we know well how that story has gone until now… (Psssst .. Monsanto, Syngenta, Dow, Dupont…)

So when we humans are building AI for a planet in peril, and when we need to recalibrate our food system, we need to consider all the stakeholders, not just us. What about the wolves, the deer, the aspen trees, the mountains? The mycelium, the bees, the bacteria that would live in the soil had we not destroyed it by overfertilizing it and overusing pesticides? Are they not stakeholders too?

Consider the barriers you as a parent, uncle or auntie might set up for a toddler. You would just step over the baby gates, right? That’s about how easy it would be for an AI far more intelligent than us to bypass the rules and morality we are putting in place. If we don’t think about this now, it might be too late, and computers will try to do it for us. We aren’t alone, and we aren’t crazy.

We love Elon Musk, not because he sends cars into space or builds Boring Companies; we love him mainly because he shares our concern that AI, if not built correctly, will be more dangerous than nuclear warheads in the hands of a Middle East dictator. We desperately need a coalition of bright minds, elders, scientists and naturalists; we need to understand the language of plants, of the wind and the soil, and only then can we begin to lay down the rules of how nature should be governed, and of how AI can be created for the planet.

We believe that, using blockchain technologies, we can connect billions of people to billions of data points, and together decide unanimously what morality is and what success should look like.

To build on a recent Musk quote from SXSW 2018, “that AI should maximize the freedom of actions of humanity,” we’d like to add that AI should maximize the freedom of actions of humanity and the natural world. In short, to survive and thrive over the next 100 years, we need to go back to a little more mountain thinking.

—

Karin Kloosterman is the editor of Green Prophet and the founder of Flux, a hardware company putting agriculture on the blockchain.
